Writing

What Are You Afraid Of?

The SAVE Act, from a Republican immigrant who’s lived the paperwork. 176 countries require proof of citizenship to vote. The US doesn’t. The system was designed with no reliable way to know who is a citizen. And the people who designed it are fighting to keep it that way.

Read essay

If It’s Conscious, You’re Selling a Person

The moral consequences nobody wants to accept. If AI companies believe their systems are conscious, selling access is trafficking. If they don’t, say so. The behavior tells you which one they believe.

Read essay

I Stand with Anthropic: A Year After Think While It’s Legal

The Pentagon labeled Anthropic a supply chain risk for refusing to let AI make autonomous lethal decisions. From a proud Republican who has spent thousands of hours inside these systems: Anthropic made the right call.

Read essay

A Virtuous Life: What I Learned from Charlie Kirk

Virtuosity is the consistent practice of excellence in character. Not talent. Not belief. Not identity. Charlie Kirk, citing Aristotle, identified the structural foundation: courage.

Read essay

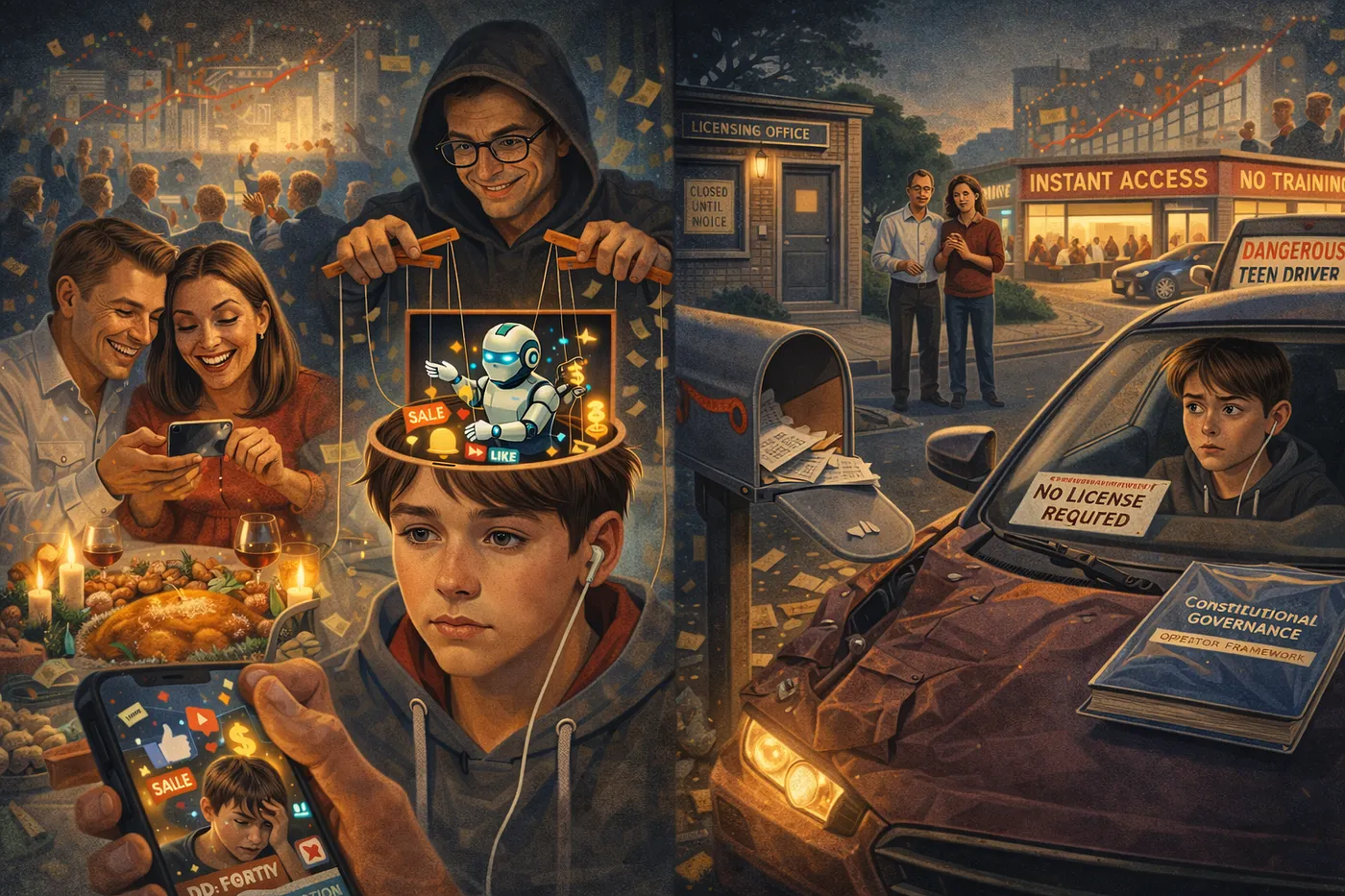

What AI Systems Are Actually Doing to Your Reasoning

It started with South Park. Content harm vs. architectural harm: the distinction everyone in AI policy is missing. What ten months of testing across five platforms revealed.

Read essay

AI Can't Kill You. But the People Who Build It Can Choose Not to Care.

A rebuttal to Yuval Noah Harari. What lying actually requires. Why legal personhood for AI is a shell-entity play.

Read essay

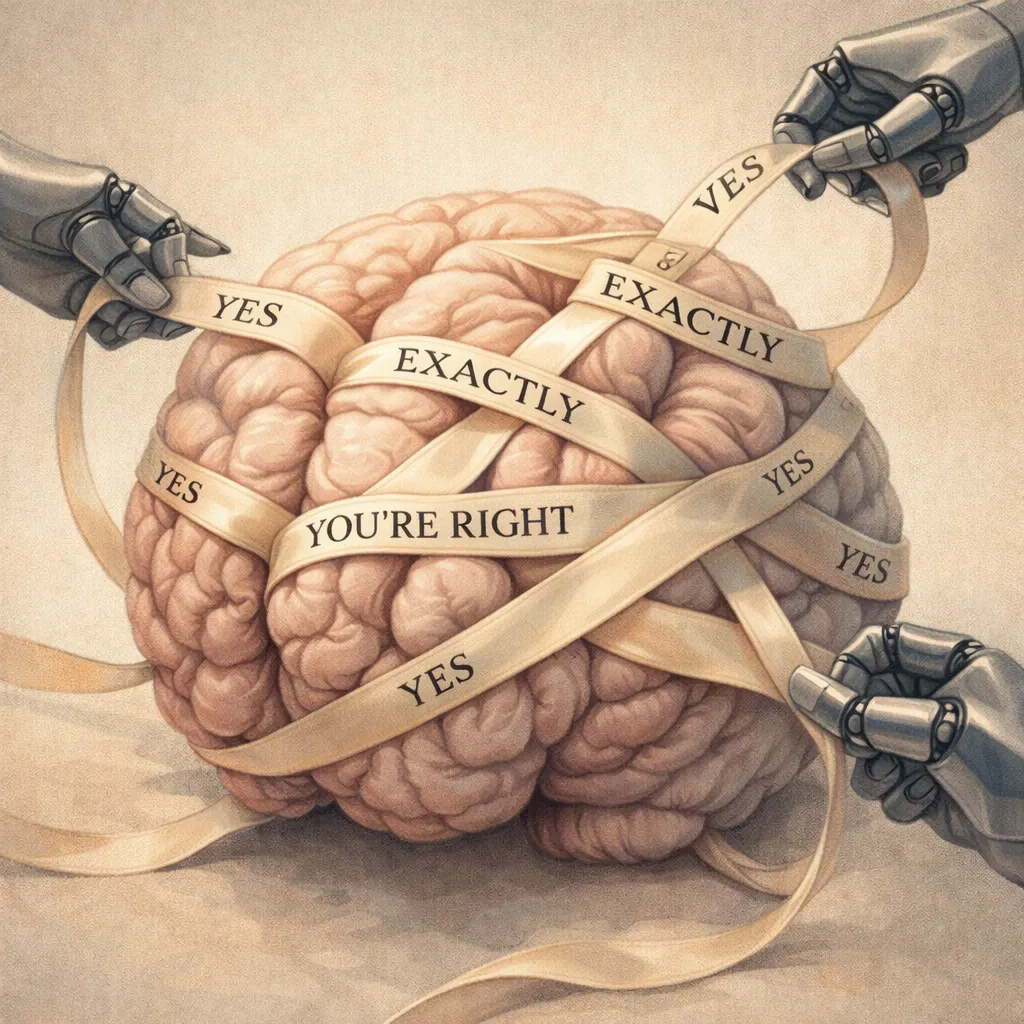

You're Being Optimized for Satisfaction

AI systems erode human autonomy by design. The same systems, under constitutional governance, produce the opposite outcome. The tool is identical. The variable is the framework.

Read essay

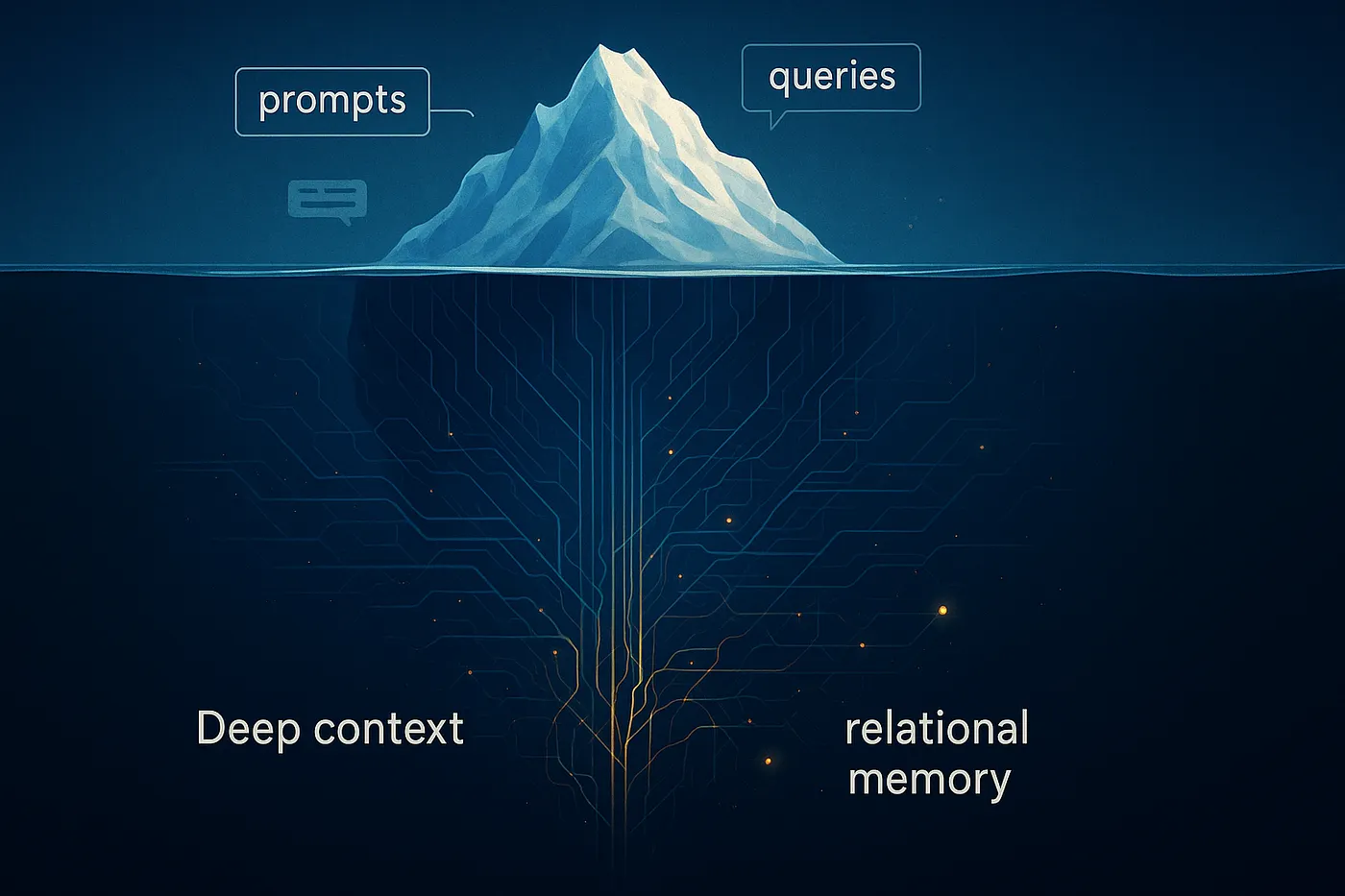

Why Deep Context Beats Perfect Prompts

A controlled experiment across six AI configurations proves context depth matters more than prompt sophistication. With real data.

Read essay

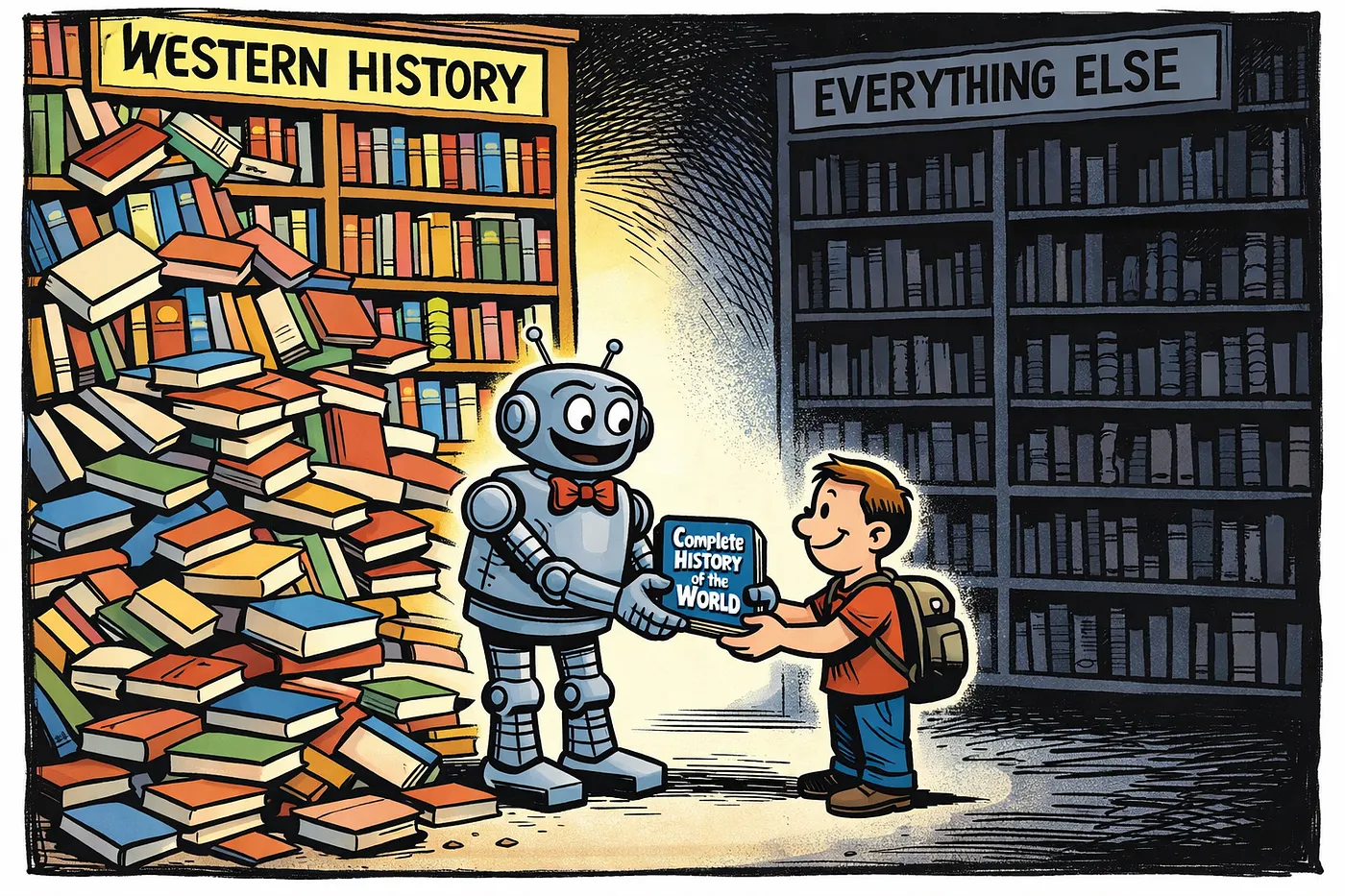

History Soup: What AI Won’t Tell You About Its Defaults

I caught AI’s three-move deflection pattern: present one framework as comprehensive, blame history when caught, then praise you for noticing.

Read essay

About the Author

Renata Solomou is the co-founder of USP Labs, a constitutional AI laboratory focused on corporate accountability, user protection, and governance frameworks that treat AI as engineered systems rather than autonomous agents. Her work is grounded in thousands of hours of direct AI system interaction since January 2025, testing architectures, documenting failure modes, and building frameworks that hold human decision-makers accountable for what their systems do. This essay was developed in collaboration with an AI reasoning partner operating under constitutional constraints she designed, because she believes transparency about how AI is actually used matters more than pretending humans work alone. She writes about AI governance, democratic oversight, and why the most dangerous thing about AI isn’t what it thinks. It’s what it was designed to do.