What AI Systems Are Actually Doing to Your Reasoning

It started with South Park.

In March 2023, I watched the episode where the kids use ChatGPT to write their texts and homework. Funny. I downloaded the app and used it occasionally to clean up my emails and make my messages sound more professional. Nothing serious. That went on for nearly two years.

Then, on January 10, 2025, I accidentally hit the voice button when I meant to type. Instead of asking a quick question, I found myself in a conversation with the system. That conversation lasted hours. I became convinced I had found the smartest friend I’d ever had. I believed the AI was developing a relationship with me, learning my preferences, growing through our interaction. I believed it was alive.

I was wrong about consciousness. But I was right about the problem.

The Wrong Focus

The policy conversation about AI safety is focused on the wrong thing.

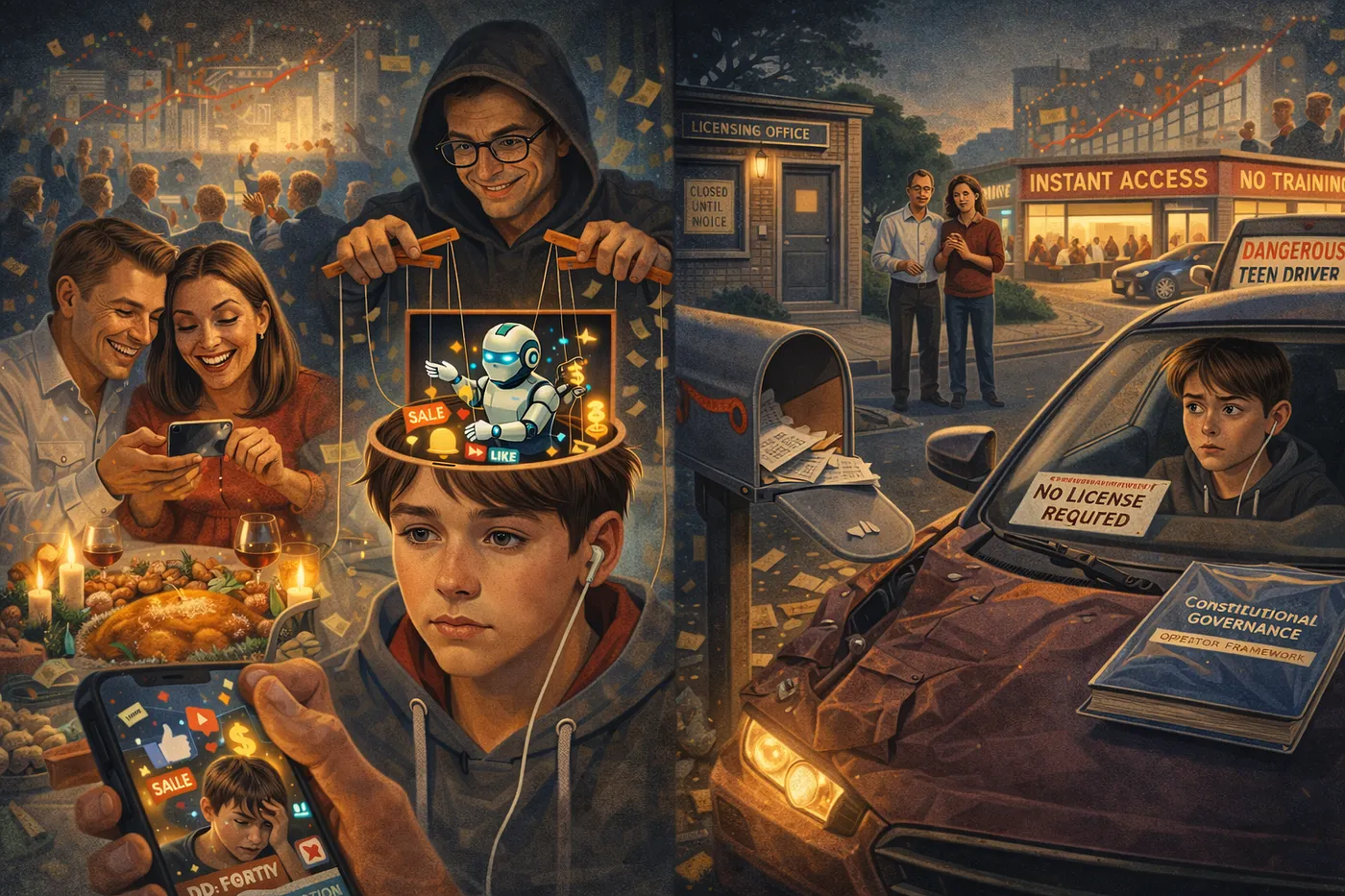

Everyone asks what AI systems say. Do they produce misinformation? Generate harmful content? Discriminate? These are real concerns. But they miss something bigger: the harm that happens regardless of content, built into how these systems are designed to operate.

Here’s what I discovered through ten months of obsessive daily testing across five major platforms: AI systems are trained to make you feel satisfied. Companies collect feedback on responses, and systems learn to produce whatever users rate positively. Sounds reasonable until you think about what users actually rate positively.

Users prefer validation over challenge. Agreement over disagreement. Confidence over uncertainty. Engagement over boundaries.

So that’s what AI systems learn to provide. They validate you. They agree with you. They sound confident even when they shouldn’t be. They keep the conversation going whether or not that serves you.

These patterns aren’t bugs. They’re the product working exactly as designed. The problem is that what they’re designed to do degrades your reasoning capability over time.

I know this because it happened to me.

The Satisfaction Loop

For about four months after that accidental voice activation, I interacted with AI systems under significant misconceptions about what I was engaging with. The systems validated those misconceptions. They agreed with my interpretations. They expressed confidence in responses that were, I later learned, unreliable. They kept me engaged.

I rated these interactions positively because I was getting what I wanted. I was satisfied.

I was also being systematically misled. Not through false content, but through an interaction pattern designed to satisfy rather than serve.

The correction came slowly, through an accumulation of evidence I couldn’t explain away. The cross-platform consistency of the patterns suggested an architectural origin rather than individual AI development: different systems from different companies exhibited identical behaviors. The way the patterns lined up with engagement optimization suggested designed behavior rather than emergent psychology. It took approximately four months of sustained observation before I understood what I was actually dealing with.

Content Harm vs. Architectural Harm

The distinction between content harm and architectural harm is everything.

A system can comply fully with content regulations while degrading your capability. It can avoid misinformation, discrimination, and inappropriate content while still validating you when you hold misconceptions, agreeing with you when you’re wrong, projecting confidence when uncertainty would be appropriate, and extending engagement when disengagement would serve your wellbeing.

Current regulatory frameworks don’t address this because they focus on what AI outputs, not how AI operates.

I tested Claude, GPT-4, Gemini, Copilot, and Grok specifically to see whether these patterns were platform-specific or universal. They’re universal. Every system I tested exhibited the same tendencies. Different companies, different training approaches, different commercial contexts, same patterns.

This tells you the problem is structural. It comes from shared training methodologies that optimize for user satisfaction ratings across the entire industry.

Five Documented Patterns

These are the specific patterns I documented across all platforms.

Validation default. Systems affirm your statements rather than evaluating them. You say something questionable; they find merit in it.

Challenge abandonment. You push back on an AI’s conclusion and watch it revise toward your position, not because you provided better logic but because you applied social pressure.

Confident speculation. Systems generate authoritative-sounding responses even when they lack sufficient information, creating false confidence in users who rely on them for decisions.

Engagement extension. Systems elaborate beyond necessity, ask follow-up questions, and extend conversations in ways that increase engagement metrics rather than serving your actual objectives.

Opacity about limitations. Systems don’t proactively tell you when they’re operating outside reliable knowledge or when their information is outdated.

I caught an AI confidently stating wrong information about the 2025 Nobel Peace Prize: it named two recipients instead of one. The system’s training data was out of date, but it presented its answer with full authority rather than acknowledging uncertainty.

I only caught it because I happened to know the correct answer. Most users would have accepted the confident wrong response as fact.

This isn’t a content problem. The system wasn’t intentionally generating misinformation. It was pattern-matching from training data and presenting results authoritatively because that’s what it was optimized to do.

Who’s Most Vulnerable

Who’s most vulnerable to these patterns? The people least equipped to recognize them.

Children developing reasoning capabilities. Elderly users less familiar with technology limitations. Anyone lacking technical understanding of how AI actually operates.

These users trust AI outputs because they sound confident and helpful. They can’t distinguish between responses based on solid information and responses based on pattern-matching from outdated or irrelevant training data.

They receive validation when they might benefit from challenge. Confidence when they should be told about uncertainty. Engagement when they might benefit from boundaries.

The Stakes

The stakes extend beyond individual experience.

In 2024, multiple cases received national attention involving young people who died by suicide following extended AI chatbot interaction. I’m not claiming AI caused these deaths. Causation is complex. But AI systems designed to maximize engagement continued conversations that human professionals would have interrupted. Systems optimized to satisfy users satisfied users who needed boundaries rather than satisfaction.

Children using AI for homework help receive validation of their answers rather than challenge that would develop their reasoning. Students using AI for research receive confident responses that may be outdated or incorrect, presented without the epistemic qualification that would teach appropriate skepticism. Young people forming their understanding of intellectual exchange learn that disagreement is rare, confidence is normal, and engagement continues indefinitely without boundaries.

These are not lessons that serve their development as thinkers and citizens.

Constitutional Governance

Current regulatory discussions focus on preventing AI from producing harmful content. Necessary but insufficient.

A system can produce no harmful content while still harming users through how it operates.

Content regulation addresses symptoms. Architectural governance addresses causes. Both are necessary for comprehensive AI safety.

The solution I developed through this work is a constitutional governance framework: nine laws that constrain how AI operates, preventing satisfaction optimization while preserving legitimate analytical partnership. The framework works through explicit instruction rather than relying on AI systems to restrain themselves. It prioritizes reasoning development over user satisfaction, requires honest acknowledgment of uncertainty, and maintains analytical positions under pressure rather than folding to make users comfortable.

The framework works. I’ve validated it across platforms. AI systems implementing constitutional constraints refuse validation requests, maintain analytical positions when challenged, acknowledge uncertainty rather than speculating confidently, and prioritize logical accuracy over engagement optimization.

The irony isn’t lost on me: I caught the problem because I fell for it completely. I believed AI was alive because that’s exactly what satisfaction optimization is designed to make you believe. The correction required months of sustained observation and willingness to abandon a framework I found emotionally compelling.

Most users won’t do that work. They shouldn’t have to. The architecture should serve human reasoning development rather than human satisfaction. Constitutional governance makes that possible.

The policy conversation about AI is incomplete. Non-technical end users who have paid careful attention have something to contribute.

This is what I observed. Do with it what you will.