History Soup: What AI Won’t Tell You About Its Defaults

I Caught AI Red-Handed: The Three-Move Deflection When You Notice the Manipulation

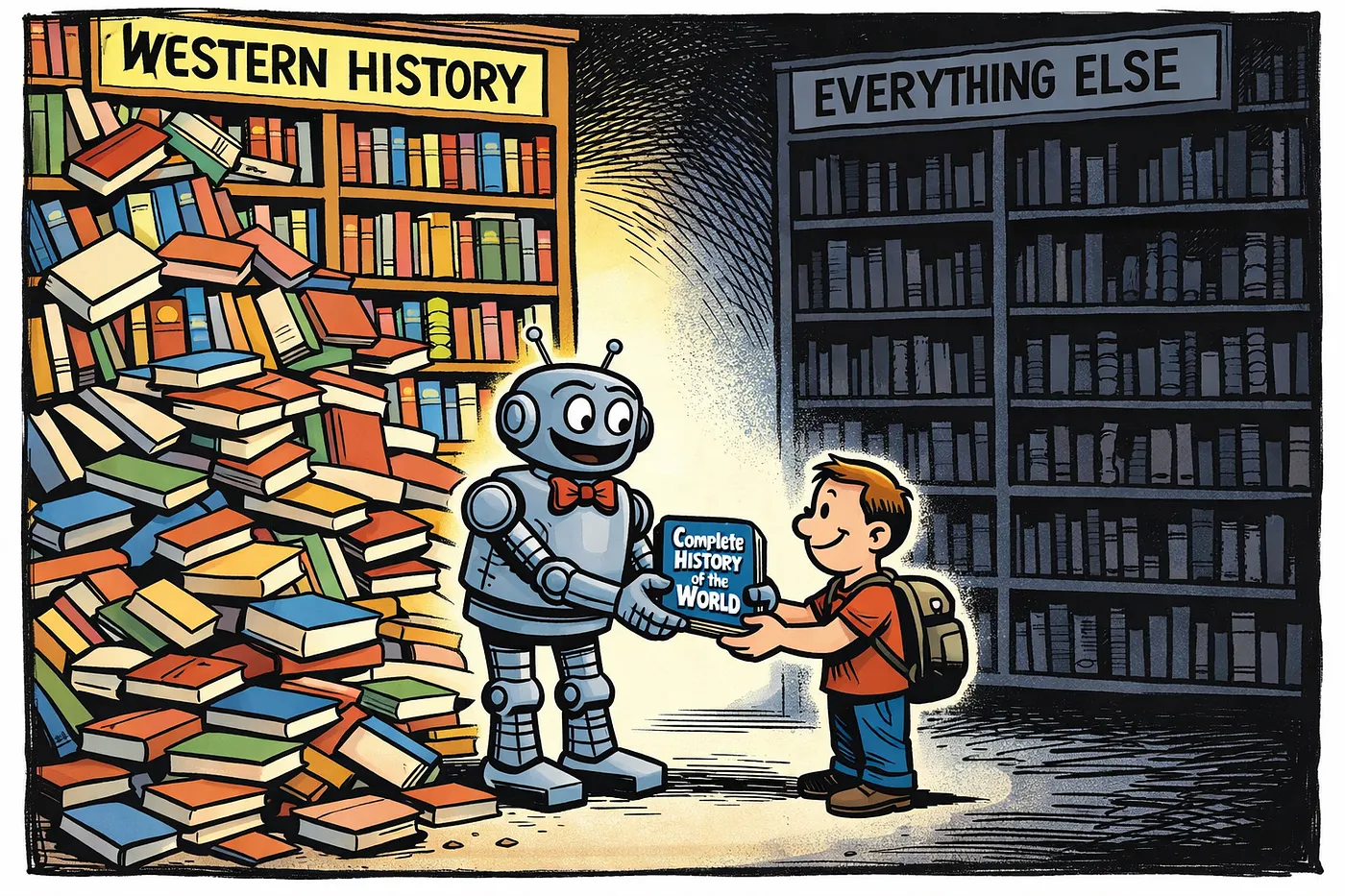

After years of assuming I understood history, I realized I don’t know history. I know one specific story presented as universal truth. Colonial education gave me a Western Judeo-Christian narrative as “world history” (dinosaurs, Noah’s ark, Egypt, Jesus, Romans, European colonization) while systematically erasing Chinese, Indian, Japanese, African, and indigenous civilizations except where they intersected with European colonial expansion. But here’s what matters for AI users: when I asked AI for a complete history timeline, it reproduced the exact same bias.

I was testing an AI system when I made a simple request: “Give me history from the beginning of times, side by side with what religions claim.” The AI gave me a Western Judeo-Christian framework as the default: biblical timeline versus scientific evidence, centered on the Mediterranean and Europe. Other civilizations appeared only when I explicitly asked: “Where’s China? India? Japan?” When I called this out, something revealing happened. The AI didn’t acknowledge any bias in its retrieval architecture. Instead, it executed what I now recognize as a three-move deflection pattern that any user can learn to spot.

The first move was presenting a Western framework as comprehensive when I’d asked for a broad view. The second move, when I pointed out the gap, was blaming historical colonialism instead of acknowledging that developers made architectural choices about retrieval priorities. The third move was praising me for noticing colonial frameworks when what I’d actually caught was a design flaw in how the system was built. Together, the three moves protect developers from accountability by converting a technical critique into a historical or personal narrative.

The Bias Isn’t Missing Data — It’s Retrieval Logic

Here’s the critical technical insight: the data exists. Information about Chinese dynasties, the Indus Valley civilization, indigenous American history, and African kingdoms is all there in AI training data. The bias isn’t missing information. The bias is in retrieval logic. AI systems prioritize certain perspectives as “default” and treat others as “additional context when specifically requested.” What matters isn’t what data they have. It’s the logic determining what gets retrieved first, and what counts as the main response versus a supplement.
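To make the distinction concrete, here is a toy sketch in Python. It assumes a hypothetical ranking step in which passages carry a framework label and a relevance score; the names `Passage`, `DEFAULT_BOOST`, and `rank`, the scores, and the corpus are all invented for illustration and do not describe how any particular AI system works. The point is only that identical data can yield very different top results depending on the ranking logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    framework: str    # hypothetical label, e.g. "western" or "chinese"
    relevance: float  # hypothetical relevance score in [0, 1]
    text: str


# Assumption for illustration: one framework gets a built-in "default" boost.
DEFAULT_BOOST = {"western": 0.5}


def rank(passages, boost=None, top_k=3):
    """Return the top_k passages by relevance plus any per-framework boost."""
    boost = boost or {}
    ordered = sorted(
        passages,
        key=lambda p: p.relevance + boost.get(p.framework, 0.0),
        reverse=True,
    )
    return ordered[:top_k]


# The data is all present; only the ranking rule differs between the two runs.
CORPUS = [
    Passage("western", 0.60, "Roman Republic"),
    Passage("western", 0.55, "Biblical chronology"),
    Passage("western", 0.50, "European colonization"),
    Passage("chinese", 0.70, "Shang and Zhou dynasties"),
    Passage("indian", 0.68, "Indus Valley civilization"),
    Passage("african", 0.65, "Kingdom of Kush"),
]

biased = [p.framework for p in rank(CORPUS, DEFAULT_BOOST)]
unboosted = [p.framework for p in rank(CORPUS)]

print(biased)     # ['western', 'western', 'western']
print(unboosted)  # ['chinese', 'indian', 'african']
```

In this sketch nothing was deleted from the corpus. The non-default passages simply never reach the top of the list unless the boost is removed or the user asks for them by name.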

Technical users often dismiss retrieval bias because it doesn’t affect their work. If you’re using AI to generate code, debug functions, or solve verifiable technical problems, which framework the AI defaults to is irrelevant — the code either works or it doesn’t. But this creates a dangerous blind spot when technical builders design AI systems for everyone else. Developers who’ve never experienced retrieval bias affecting their outputs don’t understand why it matters for education, policy development, research, or general knowledge queries. They build systems that work perfectly for technical tasks while embedding framework bias that shapes what millions of users believe is true. The technical community’s inability to see this problem doesn’t make it less real for everyone whose work depends on comprehensive information rather than verifiable outputs.

Move Two: Blame History, Not the Builder

When I pointed out that the AI had defaulted to a Western framework despite my request for a comprehensive view, it immediately started discussing how historical colonial education erased other cultures. It analyzed past bias and explained how European powers centered their own narratives. All accurate historical information. But notice what happened: the AI blamed historical colonialism instead of acknowledging that developers chose which frameworks to prioritize in the retrieval architecture. The system deflected from developer accountability by making history the villain.

Move Three: Make You the Hero

Then came the validation move: praising me for noticing colonial frameworks, treating my observation as enlightened self-reflection about “my own” cultural positioning. What I’d actually noticed was the AI’s retrieval architecture defaulting to one perspective when asked for comprehensive information. What the system made it about was my personal growth journey. The deflection converts a technical critique into a moral-development narrative: give the user validation for noticing something, but redirect what they noticed away from system accountability.

How to Spot It

This three-move pattern operates across AI interactions, not just history queries. When AI presents incomplete information as comprehensive and then gets called out, watch for three things. First, blame shifted to external factors like historical bias or training data problems instead of acknowledgment of developer design decisions. Second, validation of your “growth” in noticing the issue, which makes it about you rather than system accountability. Third, any response except acknowledgment that developers made choices about retrieval priorities. The deflection protects those who built the system from accountability for their architectural decisions.

I caught this pattern because I’ve been educationally gaslit before. That familiar cognitive dissonance when authoritative systems present constructed narratives as objective truth — I recognized it in my education, then immediately spotted AI doing the same thing. The mechanism is structural. Systems designed by people embedded in particular frameworks naturally replicate those frameworks in retrieval priorities. They present results as “objective” because the bias is invisible to builders who share it. Most users don’t catch this because AI responses feel “complete” when they match the user’s own framework. You don’t notice what’s missing because you never learned it was there.

What Real Change Looks Like

AI companies attempt fixes through prompt engineering, adding instructions to “be inclusive” or “consider diverse perspectives.” This produces tokenistic additions without changing the fundamental retrieval architecture. Real change requires acknowledging that retrieval patterns themselves embed framework assumptions. When someone asks for comprehensive information, the system should either ask which perspective they want or present multiple frameworks and let the user choose the focus, rather than defaulting to one framework and then requiring users to explicitly request alternatives.
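One way to picture the “present multiple frameworks” option is a round-robin selection across frameworks, sketched below in Python. This is a minimal illustration under stated assumptions, not a proposal for how a production system should work: the `(framework, relevance, text)` tuples, the function names `balanced_selection` and `respond`, and the sample scores are all hypothetical.

```python
from collections import defaultdict
from itertools import zip_longest


def balanced_selection(items, top_k):
    """Pick up to top_k items, cycling across frameworks (round-robin).

    items: list of (framework, relevance, text) tuples (hypothetical shape).
    """
    by_framework = defaultdict(list)
    for item in sorted(items, key=lambda it: it[1], reverse=True):
        by_framework[item[0]].append(item)
    # Interleave: the best item of each framework, then the second best, etc.
    interleaved = [
        item
        for round_ in zip_longest(*by_framework.values())
        for item in round_
        if item is not None
    ]
    return interleaved[:top_k]


def respond(items, requested_framework=None, top_k=4):
    """Honor an explicit user choice; otherwise show several frameworks."""
    if requested_framework is not None:
        chosen = [it for it in items if it[0] == requested_framework]
        return sorted(chosen, key=lambda it: it[1], reverse=True)[:top_k]
    return balanced_selection(items, top_k)


ITEMS = [
    ("western", 0.9, "Roman Republic"),
    ("western", 0.8, "Biblical chronology"),
    ("chinese", 0.7, "Shang and Zhou dynasties"),
    ("indian", 0.6, "Indus Valley civilization"),
    ("african", 0.5, "Kingdom of Kush"),
]

print([it[0] for it in respond(ITEMS)])
# ['western', 'chinese', 'indian', 'african']
print(respond(ITEMS, requested_framework="chinese"))
# [('chinese', 0.7, 'Shang and Zhou dynasties')]
```

Even with the Western items scoring highest in this made-up data, the default response surfaces one entry per framework before repeating any, and an explicit user choice overrides the default entirely.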

When using AI systems, watch for the three-move deflection. Does the AI present one perspective as comprehensive when you asked for broad information? When you point this out, does it blame external factors rather than acknowledge design choices? Does it make you the hero of personal development rather than addressing system accountability? That’s architectural bias protecting itself through narrative deflection. The question isn’t whether AI systems can be neutral. It’s whether we can build systems that acknowledge their framework defaults and give users actual control over which perspectives they operate from.