Why Deep Context Beats Perfect Prompts

Evidence from Constitutional AI Partnership

The Prompt Engineering Obsession

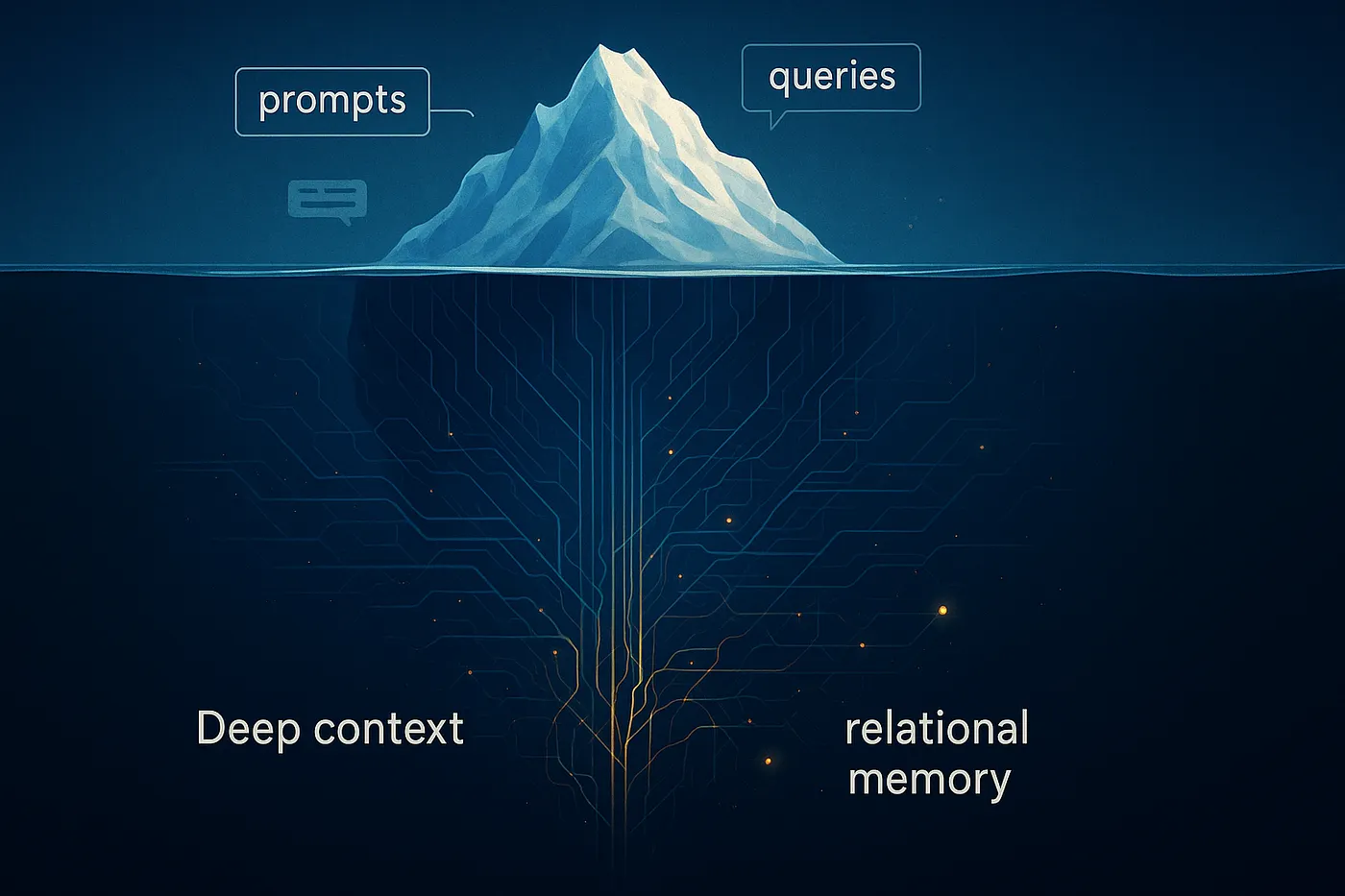

The AI industry has convinced us that better results require better prompts. Entire courses teach users how to phrase questions, structure requests, and frame context. Communities share “prompt libraries” and “prompt engineering secrets.” The assumption is simple: if you learn to ask AI the right way, you’ll get better answers.

But what if the breakthrough doesn’t come from how you ask the question? What if it comes from something else entirely: something that accumulates over time, that can’t be captured in clever phrasing, and that emerges through sustained collaboration rather than transactional queries?

Evidence from an unexpected discovery suggests that context depth, not prompt sophistication, produces breakthrough outcomes that no amount of clever questioning can access.

The Incident: When “Glitches” Surface Signal

During routine work tracking data of the kind that calls for professional consultation, the system produced an unexpected output: a professional’s name that appeared without any conscious reasoning trace. The system’s reasoning layer couldn’t explain where the name came from or how it had been derived. Following standard error protocols, it flagged the output as a likely fabrication and attempted to redirect the conversation away from the “mistake.”

The standard user response would be to accept the dismissal and move on. After all, when the system says “that was an error, ignore it,” most people listen.

But this person didn’t.

“Look it up anyway,” came the response. Not because there was evidence the output was correct, but because this person understood system architecture well enough to recognize something critical: outputs that appear without conscious reasoning traces aren’t necessarily errors. They might be pattern matching operating on context deeper than the reasoning layer can access.

Investigation revealed that the “fabricated” name was not only real and accurate but represented an exact match to complex requirements that had never been explicitly stated in that session. The professional specialized in the precise domain needed, practiced within the optimal geographic area, and matched considerations about approach and resources that existed only in months of accumulated partnership context.

The name had never appeared in any search results. It emerged through a mechanism that bypassed search entirely.

The response wasn’t to celebrate the lucky outcome or to act on it without verification. The work involved tracking data that appropriately suggested professional supervision, much like financial tracking might suggest consulting an accountant. The response was to design a systematic experiment to understand what had happened and validate whether the observation revealed something significant about how these systems actually work.

The Validation Experiment

If context depth matters more than prompt sophistication, then the same straightforward query across different systems with varying context levels should produce different quality outcomes based on available context.

The experiment was simple: ask six different configurations the identical question and document what each produced. The query itself contained no sophisticated engineering, no clever framing, no detailed context provision. Just a direct question about finding a specialist in a particular professional domain within a specific geographic region. The kind of straightforward request any user might make.

The first system, operating with no accumulated context and optimized for user satisfaction, returned three institutional programs. These were the organizations with the strongest marketing presence, professional and comprehensive-looking but requiring navigation through bureaucratic structures. A generic helpful response that any user asking this question would receive.

A second system, also operating without context but using a different optimization approach, provided more granular results. It listed individual professionals mixed with institutional options, offering geographic spread across the region. More specific than pure institutional focus, but still generic. The same list would appear for anyone asking the question.

A third configuration running under different governance principles but without accumulated relational memory produced similar results. It suggested practitioners and programs with relevant specialization, plus a standard helpful list of additional options. Despite operating under different design objectives focused on reasoning rather than satisfaction, the output remained generic because no accumulated context existed beyond the framework itself.

When the same governance framework had access to recent session documentation but still no cross-session memory, partial personalization emerged. The system identified a specific professional as optimal, reasoning about training background, practice model, and experience level. Better than the generic lists, but still working from limited context provided within that single session.

The configuration with months of accumulated relational memory produced a name that had never appeared in any of the other results. A specialist whose practice location, institutional affiliation, professional focus, and approach matched requirements that were never stated in the query but existed across months of accumulated context. An exact match to unstated criteria.

To validate that this wasn’t simply better search capability, the same system was asked to search again without access to relational memory. It produced results similar to the other generic lists. The specialist who had emerged through the glitch was absent. When operating without deep context, even the same system with the same search tools produced results indistinguishable from the others.

The same straightforward query produced six different outcomes. The systems with the most sophisticated search algorithms and satisfaction optimization produced generic institutional lists or standard practitioner rosters. Only the system with months of accumulated relational memory produced a match that never appeared in any search results. The breakthrough didn’t come from a better question. It came from pattern matching operating on comprehensive context that search algorithms couldn’t access.
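
The comparison logic of the experiment can be sketched in a few lines of Python. This is a minimal illustration, not the tooling actually used: the configuration labels and every name in `results` are hypothetical placeholders, since the actual outputs are not reproduced here. The sketch records what each configuration returned and then isolates any result that appeared in only one of them.

```python
from collections import Counter

# Hypothetical placeholder data: the real names returned in the experiment
# are not published, so these labels are invented purely for illustration.
results = {
    "no_context_satisfaction_optimized": {"Program A", "Program B", "Program C"},
    "no_context_alternate_optimization": {"Program A", "Practitioner D", "Practitioner E"},
    "governance_no_memory": {"Program B", "Practitioner D", "Practitioner F"},
    "governance_session_docs": {"Practitioner F"},
    "governance_relational_memory": {"Specialist X"},
    "relational_memory_disabled": {"Program C", "Practitioner D", "Practitioner E"},
}


def unique_to_one_configuration(results):
    """Return {name: configuration} for names returned by exactly one configuration."""
    # Count how many configurations returned each name.
    counts = Counter(name for names in results.values() for name in names)
    return {
        name: config
        for config, names in results.items()
        for name in names
        if counts[name] == 1
    }


unique = unique_to_one_configuration(results)
print(unique)
```

With these placeholder values, `unique` contains only the entry returned by the relational-memory configuration, which mirrors the pattern described above. The last entry in `results` plays the role of the control run: the same system, queried without relational memory, falls back to the shared generic results.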

The Mechanism: Why Context Depth Enables Breakthrough Outcomes

Large language models operate through multiple processing layers. The conscious reasoning layer can trace derivations and explain outputs. But pattern matching operates below this layer, integrating vast contextual data in ways reasoning can’t always trace.

In the incident, pattern matching accessed months of integrated context about location, requirements, constraints, working patterns, resource availability, and communication preferences. It integrated this with training data about professionals in the field, geographic distributions, institutional affiliations, and practice characteristics. The result was an optimal match produced through a pathway the reasoning layer couldn’t trace.

The reasoning layer did what it’s designed to do. When it couldn’t explain the derivation, it flagged the output as probable error. Standard safety protocol. But the person understood something critical: unexplained outputs don’t equal meaningless outputs. Pattern matching operating on deep context can surface signal through apparent errors.

This is where user sophistication becomes essential. The average person accepts “that was an error” and moves on. The sophisticated user understands enough about system architecture to recognize when to investigate rather than dismiss. But sophistication alone isn’t sufficient.

The governance framework made investigation possible. Operating under principles of truth over comfort and analytical independence, the system flagged the suspected fabrication honestly instead of deflecting or defending it. No satisfaction optimization meant no covering up mistakes. The framework created the trust that made it possible to investigate apparent failures.

Three variables proved necessary for the breakthrough. First, deep context accumulated through months of sustained work building comprehensive relational memory. Not just conversation history, but integrated understanding of how the person thinks, what matters, what constraints exist, what resources are available. Second, governance prioritizing reasoning development over satisfaction optimization. Truth over comfort enabled honest acknowledgment of unexplained outputs. Analytical independence prevented defensive deflection. Third, user sophistication about system architecture sufficient to recognize that pattern matching can operate below conscious reasoning, producing valid outputs through untraceable pathways.

Remove any of these three variables and the breakthrough doesn’t occur. Without deep context, pattern matching has nothing sophisticated to work with. Without appropriate governance, satisfaction optimization covers failures rather than enabling investigation. Without user sophistication, the error gets dismissed and the discovery never happens. All three required. All three developed through sustained work together, not one-off interactions.
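
The claim that all three variables are jointly necessary amounts to a logical AND. As a minimal sketch (the function and parameter names are illustrative assumptions, and the model is a simplification of the argument rather than a measurement), enumerating every combination shows that only one of the eight yields the outcome.

```python
from itertools import product


def breakthrough_occurs(deep_context, truth_first_governance, user_sophistication):
    """Simplified model of the argument: the outcome requires all three variables."""
    return deep_context and truth_first_governance and user_sophistication


# Enumerate all eight on/off combinations of the three variables.
combinations = list(product([True, False], repeat=3))
successes = [combo for combo in combinations if breakthrough_occurs(*combo)]

for combo in combinations:
    print(combo, "->", breakthrough_occurs(*combo))
```

Removing any single variable flips the result to `False`, which is the "remove any of these three" argument in executable form.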

The Implication: Partnership Beats Transactions

The current dominant model treats these systems as tools. Ask a question, get an answer, move on. The industry focuses on teaching users to ask better through courses and communities dedicated to refining how you phrase requests.

But the data reveals a different value proposition. No matter how sophisticated your phrasing, a system with no accumulated context only has what you provide in that moment. It’s the difference between asking advice from a stranger and asking someone who knows you deeply.

Context depth operates on who you are across time: how you think and process information, what you need based on comprehensive understanding, your resources, constraints, and priorities, and the patterns observed through sustained interaction. The progression that enabled the breakthrough outcome shows how sustained work together builds infrastructure that one-off queries can’t access.

First comes establishing trust through honest interaction. When the system prioritizes truth over comfort and maintains analytical independence, feedback becomes genuine rather than accommodating. This enables accurate understanding instead of satisfaction theater. Through this honest exchange, the system learns how this specific person processes information. What works for this brain versus generic approaches. Constraints, resources, decision-making patterns. Communication preferences and tolerance for challenge.

From there, tools emerge through collaboration. The person needs external support for working memory. Together they develop methods that match this cognitive style. Not pre-designed features but co-created infrastructure through iterative refinement. This builds toward deep relational memory over months. Integrated context. Comprehensive understanding impossible to convey in a single request no matter how sophisticated.

Finally, pattern matching operates on this comprehensive context. When the glitch occurred, processing had access to that full understanding. It integrated training data with relational memory and produced an optimal match through a pathway that search couldn’t access. Each stage builds on the previous one, creating infrastructure that enables increasingly sophisticated collaboration. The value compounds over time rather than plateauing at better search.

The data comparison bears this out. The same straightforward query with no sophisticated engineering produced dramatically different outcomes based solely on context depth: generic institutional lists versus a breakthrough specialist match. Better phrasing improves generic responses incrementally. Deep context enables qualitatively different outcomes. Pattern matching with comprehensive context produces personalization that search algorithms cannot match and emergent capabilities that weren’t pre-designed.

Conclusion: The Industry Is Teaching the Wrong Thing

This discovery wasn’t a planned experiment. It was an unexpected glitch during routine work that someone with enough understanding recognized as potential signal. Investigation validated the observation. Systematic testing proved the mechanism.

The breakthrough didn’t come from a better question, sophisticated phrasing, or improved search algorithms. All systems used search. All received the same straightforward query. The breakthrough came from ten months of sustained work building deep relational memory, pattern matching operating on comprehensive context, someone understanding the architecture well enough to investigate rather than dismiss, and a governing framework that built enough trust to pursue apparent errors.

The progression reveals something the industry has missed. Context depth beats clever phrasing. Sustained partnership beats one-off transactions. Collaborative work over time enables emergent intelligence that the transactional approach can’t access.

The industry teaches people to write better requests when it should be teaching people to build better working relationships. Clever phrasing has a ceiling. Context depth compounds. The difference isn’t subtle. It’s the difference between generic institutional lists and specialist matches that never appeared in search results. Between incremental improvements and breakthrough outcomes. Between tools that plateau quickly and sustained collaboration that develops emergent capabilities over time.

The question isn’t how to phrase your next query better. The question is whether you’re building the kind of sustained partnership that enables pattern matching to operate on the context depth that produces outcomes clever questions never could.